What is RAG?

Retrieval-Augmented Generation (RAG) is a powerful technique in AI that combines the strengths of information retrieval and generative models. Instead of relying solely on a language model’s pre-trained knowledge, RAG first retrieves relevant information from a knowledge base and then uses that context to generate more accurate and up-to-date responses.

Why is RAG important? Traditional language models can hallucinate or provide outdated information. RAG addresses this by grounding responses in real, external data, making AI systems more reliable for tasks like question-answering, chatbots, and knowledge assistants.

How RAG Works

RAG typically involves two main steps:

- Retrieval: Search for relevant documents or data chunks based on the user’s query.

- Generation: Feed the retrieved information as context to a language model, which generates a response.

Common tools for RAG include vector databases (e.g., Pinecone, Chroma) for efficient similarity search, and embedding models to convert text into vectors. To keep things simple, our example uses a plain JSON file instead of a vector database.

Our Simple RAG Example

In this post, we’ll build a basic RAG system using Ollama, a local AI platform. Our example is a contact lookup chatbot: users can ask questions like “What’s Peter’s phone number?” and the system retrieves the relevant contact info before generating a response.

We are going to extend the example from this blog post. You can download the full source code here: GitHub

Key Components

- Data: A JSON file with 100+ contacts (name, phone, email, address).

- Embeddings: We use Ollama’s `mxbai-embed-large` model to convert contact details into vectors.

- Similarity Search: Cosine similarity to find the best-matching contact.

- Generation: Ollama’s `llama3` model generates responses using the retrieved contact as context.

- Intent Detection: A simple keyword-based check to decide if a query needs RAG or just general chat.

Step-by-Step Implementation

Prepare Data:

- Create `contacts.json` with contact details or use the example data provided with the source code.

- Run `build_embeddings.js` to generate embeddings for each contact using the full text (name + phone + email + address).

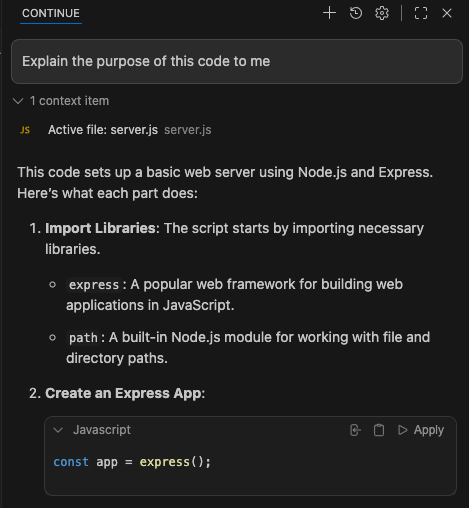
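
To make the data preparation step more concrete, here is a minimal sketch of how the embeddings could be generated. It is not the actual `build_embeddings.js` from the repository, which may differ. It joins each contact's fields into one string and sends it to Ollama's `/api/embeddings` endpoint. The field names (`name`, `phone`, `email`, `address`), the helper names, and the default Ollama address `http://localhost:11434` are assumptions, and the sketch relies on the built-in `fetch` of Node 18+.

```javascript
// Sketch only: field names and helper names are assumptions, not the repo's code.
const OLLAMA_URL = "http://localhost:11434"; // Ollama's default local address
const EMBED_MODEL = "mxbai-embed-large";

// Join all fields into the single text that gets embedded.
// Missing fields are skipped.
function contactToText(contact) {
  return [contact.name, contact.phone, contact.email, contact.address]
    .filter(Boolean)
    .join(", ");
}

// Ask Ollama for the embedding vector of one piece of text.
async function embed(text) {
  const res = await fetch(`${OLLAMA_URL}/api/embeddings`, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ model: EMBED_MODEL, prompt: text }),
  });
  if (!res.ok) throw new Error(`Ollama returned HTTP ${res.status}`);
  const data = await res.json();
  return data.embedding; // array of numbers
}

// Embed every contact, one request at a time, keeping the original fields.
async function embedContacts(contacts) {
  const result = [];
  for (const contact of contacts) {
    const text = contactToText(contact);
    result.push({ ...contact, text, embedding: await embed(text) });
  }
  return result;
}
```

The returned array could then be written to disk (for example with `fs.writeFileSync`), so the server can load it at startup without re-embedding every contact.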

Server Setup (server.js):

- Handle POST requests to `/rag`.

- Detect if the query is contact-related (using keywords like “phone”, “email”).

- If yes: Generate query embedding, find the most similar contact via cosine similarity, build context, and call the LLM.

- If no: Direct LLM call without context.
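
The routing logic above can be sketched roughly as follows. This is an illustration under assumptions, not the actual `server.js`: the keyword list beyond “phone” and “email”, the prompt wording, and the `retrieve` callback are all hypothetical. The call to Ollama uses its `/api/generate` endpoint with streaming turned off.

```javascript
// Sketch only: keyword list and prompt wording are illustrative assumptions.
const CONTACT_KEYWORDS = ["phone", "email", "address", "contact", "number"];

// Keyword-based intent check: does the query look contact-related?
function isContactQuery(query) {
  const q = query.toLowerCase();
  return CONTACT_KEYWORDS.some((kw) => q.includes(kw));
}

// Put the retrieved contact in front of the question so the model can use it.
function buildPrompt(query, contactText) {
  return [
    "Answer the question using only the contact information below.",
    `Contact: ${contactText}`,
    `Question: ${query}`,
  ].join("\n");
}

// Non-streaming call to Ollama's generate endpoint.
async function generate(prompt) {
  const res = await fetch("http://localhost:11434/api/generate", {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ model: "llama3", prompt, stream: false }),
  });
  if (!res.ok) throw new Error(`Ollama returned HTTP ${res.status}`);
  return (await res.json()).response;
}

// Route a query: with retrieved context if contact-related, otherwise plain chat.
// `retrieve` is a hypothetical function returning the best-matching contact text.
async function answer(query, retrieve) {
  if (!isContactQuery(query)) return generate(query);
  const contactText = await retrieve(query);
  return generate(buildPrompt(query, contactText));
}
```

A keyword check like this is easy to read but brittle: a query such as “How do I reach Peter?” would be treated as general chat because it contains none of the keywords.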

Cosine Similarity:

- Measures how closely two vectors point in the same direction (-1 to 1, where 1 means the same direction).

- Used to rank contacts by relevance to the query.

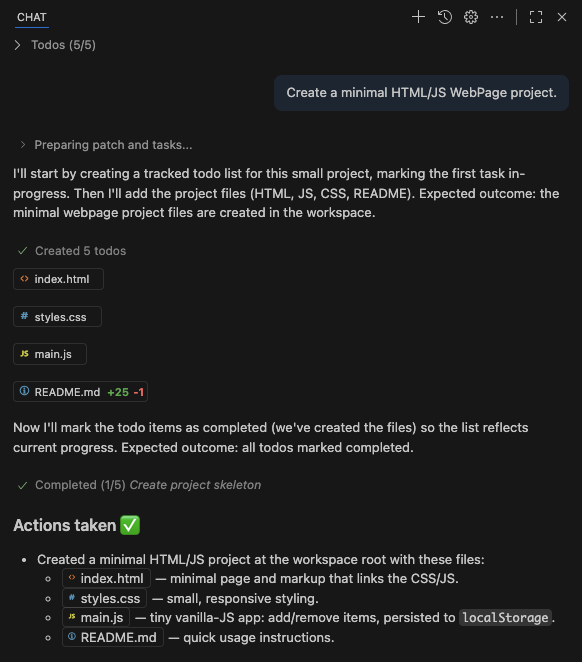
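
For readers who prefer text over a screenshot, here is a minimal sketch of cosine similarity and a best-match search built on it. The entry shape `{ text, embedding }` and the function names are assumptions made for illustration; the code in the repository may be organized differently.

```javascript
// Cosine similarity: dot(a, b) / (|a| * |b|).
function cosineSimilarity(a, b) {
  if (a.length !== b.length) {
    throw new Error("Vectors must have the same length");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  // The similarity is undefined for a zero vector; treat it as "no match".
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Return the entry whose embedding is most similar to the query embedding.
// Assumes entries look like { text, embedding } (hypothetical shape).
function findBestMatch(queryEmbedding, entries) {
  let best = null;
  let bestScore = -Infinity;
  for (const entry of entries) {
    const score = cosineSimilarity(queryEmbedding, entry.embedding);
    if (score > bestScore) {
      best = entry;
      bestScore = score;
    }
  }
  return { entry: best, score: bestScore };
}
```

With only 100 or so contacts, a linear scan like this is fast enough; a vector database only starts to pay off with much larger collections.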

Frontend (index.html, main.js):

- Simple chat interface.

- Sends queries to `/rag` and displays responses.
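
On the client side, the call to the server could look something like the sketch below. The JSON field names (`query` in the request, `answer` in the response) and the function names are assumptions; check `main.js` and `server.js` in the repository for the actual contract.

```javascript
// Sketch only: the `query` and `answer` field names are assumptions.

// Build the fetch options for a POST to /rag.
function buildRagRequest(query) {
  return {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ query }),
  };
}

// Send the user's question to the server and return the answer text.
async function askRag(query) {
  const res = await fetch("/rag", buildRagRequest(query));
  if (!res.ok) throw new Error(`Server returned HTTP ${res.status}`);
  const data = await res.json();
  return data.answer;
}
```

The chat interface would call `askRag` when the user submits a message and append the returned text to the conversation.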

Running the Example

- Install Ollama and pull models: `ollama pull mxbai-embed-large` and `ollama pull llama3`.

- Run `node build_embeddings.js` to prepare data.

- Start server: `node server.js`.

- Open `http://localhost:8000` and chat!

Example queries:

- “What’s Anna’s email?” → Retrieves Anna’s contact and generates a response.

- “Tell a joke.” → General LLM response.

Conclusion

This example demonstrates RAG’s core principles in under 200 lines of code. It’s not production-ready (no error handling, no security, and a JSON file instead of a database), but it’s perfect for learning.

RAG bridges the gap between retrieval and generation, making AI more factual and context-aware. Our contact chatbot shows how easy it is to implement with local tools like Ollama.

Full code: GitHub